Claire Blackshaw

I’m a queer Creative Programmer, with a side dish of design and consultancy, and a passion for research and artistic applications of technology. I work as a Technical Director at Flammable Penguins Games on unannounced title.

I've had a long career in games and I still love them, also spent a few years building creative tools at Adobe.

Love living in London.

When I'm not programming, playing games, roleplaying, learning, or reading, you can typically find me skating or streaming on Twitch.

Latest Video

Better PRs: Voting Panels, AI Review, and Stack Your Damn Commits

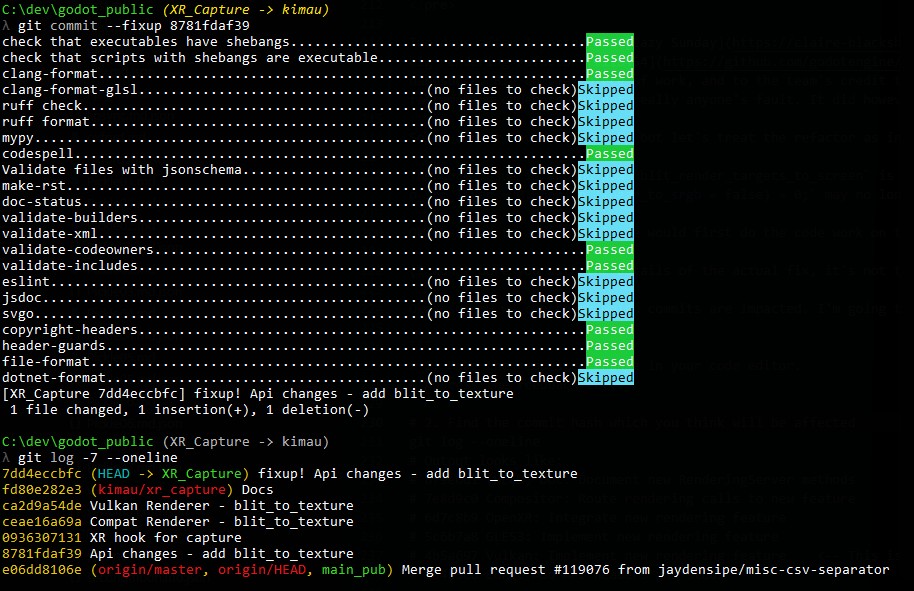

TLDR: Post GodotCon brain dump. First and foremost this is a people problem, process and tools just help us scale our collective fallibility. Three angles on the rest: Juan's anon voting panel proposal (good idea, needs guardrails), AI review (yes, do it, it's already standard at commercial studios), and stack your bloody commits (the only fix you can ship today). Worked example with git commit --fixup and git rebase --autosquash using my XR capture PR.

My brain is buzzing post GodotCon. Fabulous event with a lot of good conversations so apologies if my blogging is a bit prolific for a while. I wanted to talk a bit about the biggest challenge facing Godot which is PR overload and reviews.

First and foremost this is a people problem. Process and procedure are mostly about how to fairly scale this and make a task too large to manage more tractable while adding some overhead. PRs are great for low trust environments but obviously on a small trusted team they are often a hindrance. Much like politics is harmful in a small group but essential for a large nation.

None of us are perfect. I've messed up many times in this process. But that very fallibility of humans is why these processes and tools are useful and also why large organisations benefit from lag and slow reactions.

To break this down I want to talk about a few things

- Describe the issue

- Juan's voting proposal

- Why AI code review is a good idea

- Stacked commits a better way to structure PRs if you only read one thing make it this

The PR Overflow

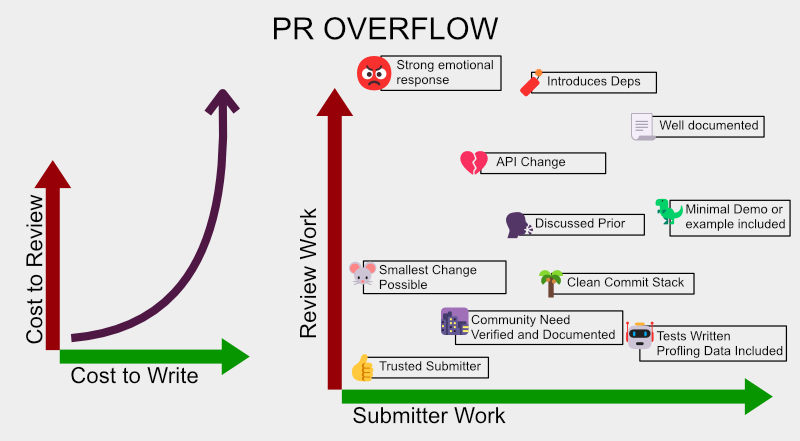

The two axes that drive the overload: review cost grows non-linearly with code complexity, and the things that make a PR cheap to review are exactly the things submitters can choose to invest in upfront.

The core issue facing Godot simply can be expressed as:

- It is more fun to write code than read it

- Open Source projects are getting flooded with low quality PR, esp in a post AI world

- Reviewing code requires you to smarter than writing it and it takes more effort to review than write

- Godot suffers from a lack of professional gamedev reviewers

- A lot of Godot contributors don't have commercial engine experience

- Top tier contributors time is torn between core work and review

- The barrier to PR submission is low

- All the issues of online, social media and personalities

- By default PRs are ghosted to avoid social conflict and work

I've been very vocal about how painful PR submission has been for myself and how much money and time it has cost us. I still strongly believe in giving back to the community but for a wide range of reasons at least for the near future I see us as company pulling away from PR unless they are XR specific or small enough to be very low drag.

The core issues we as a company are hitting with Godot are very high drag and often involve larger code changes, so I foresee those as big time sinks in PR land I would prefer to prove them out ourselves before submitting them back. Though the issue is if more commercial devs with depth of experience are pulling back from it over time the quality of PR submission and solving hard problems is going down.

Long term I truly believe Open Source is the correct and best way forward and will result in better technical decisions. Though much like politics for it to work it needs to be somewhat slow by design.

Worth naming the root cause directly. There just aren't enough experienced people with time. And the dynamic compounds: the more experienced you are the less time you have to review, because experienced people are valuable and get hired to do work not review PRs from contributors they don't know. Open source projects rarely escape this until a large company starts depending on them and starts paying for reviewer time. None of what follows in this post fixes that scarcity. Voting panels, AI review, stacked commits, all of it is just trying to spend the limited reviewer attention we have more wisely.

Voting Panel

TLDR on his proposal:

Trusted reviewers are given the opportunity to assign scores in X categories which are then used in a weighted equation to produce an overall viability score to INFORM not gate the PR.

Juan gave an interesting talk about anonymous voting panels at GodotCon. Slides and recording weren't public at time of writing. While I largely agree with his proposal it is inherently technocratic way to solve a social issue and incorrectly implemented will lead to massive discrimination and dissolution of community.

His solution to ghosting is a lower effort system which protects individuals from retaliation.

To circle back: By default PRs are ghosted to avoid social conflict and work

The same reason you don't hear back from companies. The moment an opinion is expressed in writing it becomes a point to be contested. Also this is why companies ghost you because giving a reason assigns accountability and no response is the lowest friction solution.

People are hard and trying to remove people from the social equation is a knee jerk response which when well intentioned can erode community and when not well intentioned can become the tool of the worst instincts of humanity. You need to keep accountability and keep things people focused.

The issue with anonymous feedback in this kind of setting (judging people's contributions, not blind judging of work-on-merit) is well documented in the social-systems literature: it tends to worsen bias and discrimination rather than reduce it. Compare the figure skating compatriot-judge bias (Zitzewitz) with the more successful Hubble dual anonymous peer review. Without guardrails this could seriously isolate trusted reviewers, harden boundaries, and lead to cultish insular behaviour.

My suggestion is based on work I have done with grant communities allocating funds in government capacity but also private funds. The key solution tweaks are rather small:

- Full Audit Trail and Accountability

- Resolve with humans not math

- Built for appeal

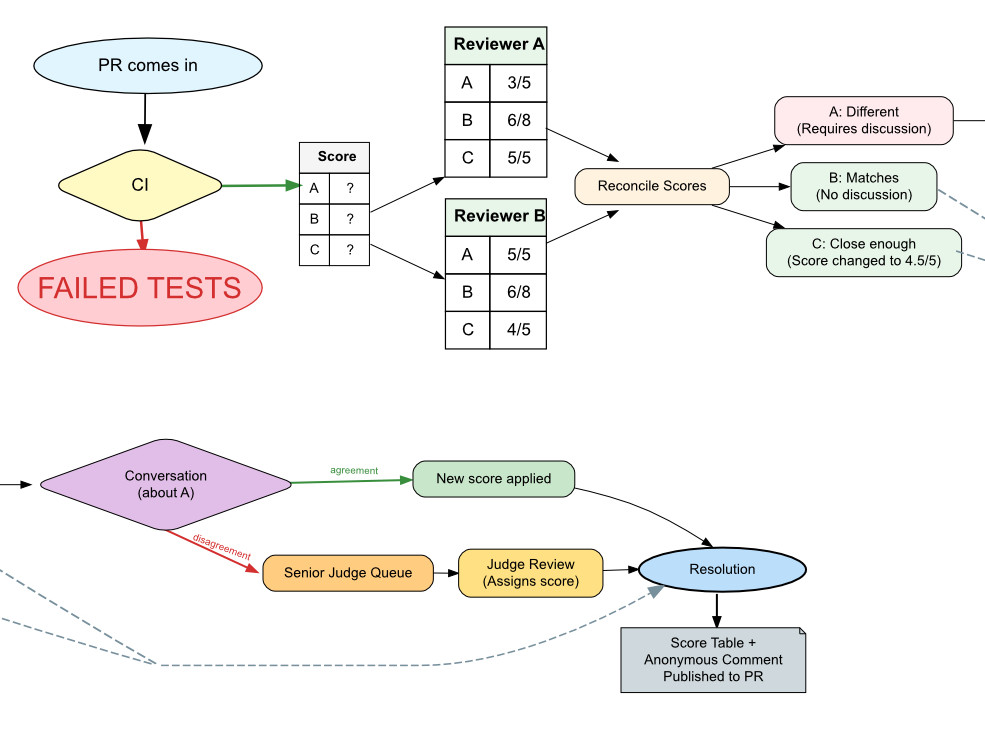

The full pipeline. CI is the first gate. Two anon reviewers score per category. Matches and close-enough scores resolve straight through. Divergent scores trigger a conversation, or escalate to a senior judge if no agreement is reached. The PR gets a final score table plus the anonymous comments published back, so the submitter has something to argue with.

Full Audit Trail and Accountability

The first step is you need to make it clear to all voters that they will be recorded as the voting individual and they must be prepared to defend that score and rationale. On formal review committees this also means for each scoring area clear guidance is provided as to what is being scored and examples of high/mid/low score.

Resolve with Humans Not Math

When 2 or 3 reviewers score a proposal, if any category diverges by more than X (normally around 5-10%) the reviewers need to agree on a consolidated score. Often in discussion the final score is not the average but actually judge A or B or C's score or an entirely new score. People share knowledge and a new group opinion is formed.

If no consensus can be reached the scores with comments and reasons are sent to a lead judge who assigns a final score. The combined and summarised comments on those scores are shared with the candidate. They are anonymous and the final opinion not work product. Providing them stops this being a cold number and gives social reasoning and a point to contest. The reviewers may have misunderstood or lack knowledge in an area.

Built for Appeal

If the candidate disagrees they may file a formal appeal. In a grant setting this is sometimes gated by an admin fee or other criteria. For Godot it might be reserved to a single appeal in a time period. The proposal goes to a single senior individual who can look it over and provide a yes or no on whether to open it for discussion.

If opened for discussion, anonymity must be removed and people need to talk in public. This removal of anonymity is critical for accountability and productive discussion. It provides public record and is often the best tool for spotting bad apples and surfacing systemic issues. It also becomes a documented discussion to inform future technical work and decisions. The matter is then resolved with a final decision and documented.

This is not as low effort as the simple voting system proposed but it achieves the following things

- Removes risk of increasing bias and discrimination

- Protects the initial reviewers from online trolls and rage

- Accounts for GDPR / FOI and similar processes which the initial proposal did not consider

NOTE:: I have partaken in these systems and helped discussing the design of similar systems but have not been involved in the final legal compliance setup, especially in Dutch non-profit context.

AI Code Review

Anyone involved in the world of open source will acknowledge that it's a fucking hellscape since coding AI have flooded the inboxes of every public project in recent years. It is an issue we are all still grappling with. I'm going to state some opinions here to calibrate the position I'm coming from.

Assumptions and Sidebar

I largely align with Linus Torvalds that AI is "90% marketing and 10% reality" (video). It's just another tool, and we need to focus on code quality, not origin. Ultimately, a human has to be responsible for every line of code. While a lot of AI-written code being produced today is low quality, in restricted and tight contexts it can be competitive with skilled humans (see DeepMind's work on matrix multiplication kernels for example) and importantly it never just drops a lazy LGTM. AI companies are largely devoid of ethics and we have large social structure issues to address, but AI isn't going anywhere. Even if a financial crash happens, it will only accelerate things. It's already becoming an essential part of commercial workflows.

Quick disclaimer: These takes may seem controversial and defending them is a whole other article entirely. I want to state clearly that our games include no generative AI, though we have coded our own constraint-based solver and use the AI tooling everyone does. Microsoft's IntelliCode (the ML based ranker on top of IntelliSense) has been around since 2018, but the broader point is that helper tooling has been a staple of game and engine dev work for a long time. Visual Assist for instance has been a fixture in C++ gamedev for over two decades, doing the same kind of "smart suggestion and refactor" work, just rule based not statistical. ML or not, the helper-tech is not new. The fine line distinctions are hard and nuanced, and we should be holding people to account and having these discussions.

That being said a purity doctrine is unhelpful because we need to figure out how to exist in the real world. I'm more sympathetic to a religious person who disagrees with my existence as a queer woman who is feeding people and doing charitable work than I am to a corporation giving lip service to pride while destroying us in class warfare. IT IS COMPLEX!

CI tooling - first line defence

Look the simplest step is CI tooling if a PR is not compiling or we have metrics we can run that don't involve AI to give them a little traffic light this should ALWAYS be our first line of defence. No one should even look at a PR until it passes continuous integration checks.

Why AI Code Review

AI Code review is really good at a few things and is already used by a wide range of game studios and professional companies who have a higher code standard than 99.999% of Godot contributors and users.

The key tool AI code review provides is a written opinion with additional information which can be actioned or ignored by humans.

Strengths

- Spots typos, easy errors and misalignment of comments and naming with behaviours

- Can check against code style natural language documentation

- Can provide immediate feedback on the PR without human cost

- Can pre-interrogate the PR with the obvious questions a reviewer would have raised, shifting the legwork onto the submitter where it belongs

The typos issue is probably the biggest time savers in companies. I know of several who are now running AI review on every commit. Getting AI out of peoples way so it doesn't interfere with the code flow but then using it in the background to spot these little mistakes is a huge benefit and catches a lot of small problems or inconsistencies before they go any further.

The fact that it reviews every line without ever doing a lazy I know the author "looks good to me, thumbs up" review is of huge value in catching issues.

Also the ability to point it at natural language documentation and reference allows it to enforce some softer rules which are much harder to encode into CI tooling.

The pre-interrogation point is worth dwelling on. A reviewer's time is the bottleneck so anything we can do to push obvious questions back to the submitter (where the cognitive context already exists) is a win. AI is great at "have you considered X", "this looks like it might break Y", "is this consistent with how Z is done elsewhere in the engine". The submitter answers those before a human ever opens the PR. The handful of trusted reviewers spend their finite attention on the actually-hard stuff. The more burden we can shift from the small reviewer pool to the much larger submission base, the better.

Then the final and most useful case I have seen from commercial studios which have their own tech stack. The reality is they have years of solving similar problems and a history of taste and preference embedded in the code. AI can help enforce that and say: Hey you are trying to access this data but the common pattern in the engine is actually to do XYZ. This is extremely helpful with new hires in commercial setting but even more so in a public code base with new contributors or people working on parts of the engine outside their normal remit.

But Doesn't This Cost Money

Worth heading off the obvious objection. Godot is MIT licensed open source. The entire codebase is already being scraped and trained on by every AI lab on the planet, that ship has long since sailed. On top of that most of the major code review products (CodeRabbit, Greptile, Cursor's review, etc.) offer free tiers for public open source projects, and the more-renowned ones often get bumped to better tiers on request. Cost is much less of a barrier than people assume.

Stacked Commits

The most useful bit of feedback I would give that you can action today: STACK YOUR COMMITS.

Your PR commits should be a clean version of development that should be easy to review. There is a concept sometimes called Atomic Commits, Stacked Diffs or Storytelling with Git. Core idea is to

- Guide the reviewer through the code

- Isolate technical areas of responsibility

- Easier rollbacks and cherry picking behaviour

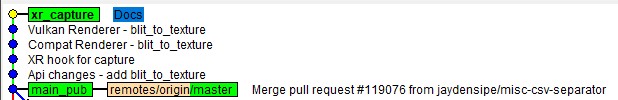

The actual final state of my xr_capture branch after the cleanup. Each commit is one logical layer: API surface, XR hook, the two renderer backends, then docs. Easy to review top-down, easy to cherry-pick a single layer, easy to fixup any one of them without disturbing the rest.

So you're done working on your PR let's create a clean commit story.

I'm going to use an existing PR of mine to help explain this. It is the PR described in my blog post Lazy Sunday: Feedback Screens, Viewport Bugs, and Giving Back to Godot

Ensure you have the latest code from the upstream repository

git fetch upstream

Reset your branch pointer to the base branch.

--soft keeps all your changes staged in the index ready to be re-committed in clean chunks.

git reset --soft upstream/master

Now if I do a diff I see all the files changed in this commit.

At this point many people will make a single large commit, this is a mistake unless the change is small.

# 1. API surface

git add servers/rendering/rendering_server.h

git add servers/rendering/rendering_server.cpp

git commit -m "Api changes - add blit_to_texture"

# 2. XR hook

git add modules/openxr/

git commit -m "XR hook for capture"

# 3. Compatibility (GLES) renderer

git add drivers/gles3/

git commit -m "Compat Renderer - blit_to_texture"

# 4. Vulkan renderer

git add servers/rendering/renderer_rd/

git commit -m "Vulkan Renderer - blit_to_texture"

# 5. Documentation

git add doc/classes/RenderingServer.xml

git commit -m "Docs"

# 6. Force push to update the PR with the new, clean history

git push origin XR_Capture --force-with-lease

By breaking this up you can up front see the areas of the commit and identify the work the commit is doing.

Note: The larger block of text and full explanation should be included in the PR. For context here is my PR message.

As discussed in the XR channel, we had issues on our version of the engine capturing the viewport. This was required for a feedback system in Augmental Puzzles. Our requirements were: Capture the full eye Minimal overhead Avoid any frame hitch on XR We implemented this purely as a Vulkan capture similar to how the screen blit is done, though in this PR I have expanded it to include GLES so I could move it into the base compositor. I have not tested the GLES side as I'm less familiar with that part of the code, so it could definitely use a review by someone more familiar with that side. I've sketched out rough docs and a demo. Demo project using the feature: blit-capture-demo.zip AI Disclosure: Claude Opus was used to review this code and help me with the GLES conversion (as I'm less familiar with Godot's GLES implementation), though I have reviewed all the code and there is no direct slop in here. I also used it to write a first draft and spell-check my edits on the docs.

While I was waiting for review, a big rendering refactor #116454 landed which pulled apart RenderingServer and moved a pile of enums into a new RSE namespace. This is huge PR dropped pretty fast and did a lot of renames and restructure which broke a lot of inflight PR, including mine, and will makes cherry picking harder given the scale of changes. Valuable work and the team did flag the in-flight breakage in the PR description. It's possible my Lazy Sunday post drew attention to the area or possibly it was just timing, either way I want to be careful not to read intent into it. What it did do is block my PR until I rebased, which gave me a perfect excuse to walk through the fixup workflow.

So treat this as implicit feedback that the area I'm touching is moving around. The wiring around blit_render_targets_to_screen shifted, which means our virtual void blit_to_texture(RID p_src_texture, RID p_dst_texture, uint32_t p_src_layer = 0, bool p_linear_to_srgb = false) = 0; may no longer sit in quite the right place.

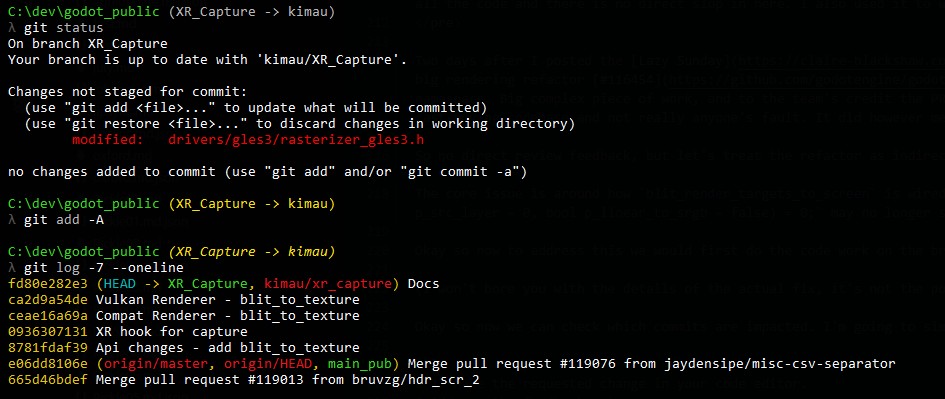

Okay so now to address this we would first do the code work on the branch to make the fixes.

(I won't bore you with the details of the actual fix, it's not the point of this post.)

Here is the actual workflow on my branch.

Step 1: make the fix and stage it. In this case the affected file was drivers/gles3/rasterizer_gles3.h.

Pre-fix state. Clean stack with Docs at HEAD. Edit the file, git add -A.

Step 2: create a fixup commit targeting the original. I want this fix folded into the Api changes - add blit_to_texture commit (hash 8781fdaf39).

git commit --fixup 8781fdaf39

Pre-commit hooks pass and Git creates a fixup! commit at HEAD. The commit message is auto-prefixed with fixup! and references the target.

Step 3: autosquash rebase, then push.

git rebase -i --autosquash main_pub

git push -f

The fixup gets folded into the target commit automatically. New hashes everywhere because rebase rewrites history. Push goes up with -f (or --force-with-lease if you want to be safer about overwriting other peoples work).

Quick note on main_pub: that is just my local tracking branch for upstream master. Substitute whatever you call yours.

On the editor: with --autosquash plus -i Git opens the rebase todo file with your fixup already moved underneath the target and marked fixup. Save and close, done. Drop the -i if you trust it to just go.

Now the PR can be reviewed and any approvals or comments on the commits which were NOT touched will be left intact, so only the changed work needs re-review.

A note on this not being a hard rule. Stacking is a soft norm, an exemplary practice for those who can. We absolutely should not gate newcomer PRs on git mastery, and a single big PR with thoughtful explanation is still infinitely better than no PR. Where I think it shines is when experienced devs lead by example, write clear guides, and let the standard drift up over time. Also git rebase --autosquash is amazing on focused PRs and gets fiddly on epics, so use the right tool for the size of the work.

Some may think we could automate this, but ultimately splitting your commits and framing the work is an act of narrative storytelling. Something that is soft and very human and even top experts in intelligence will acknowledge is unlikely to ever be crossed because it is based on shared cultural reference and experience. You are trying to relate to the person, connect, and help them understand what was going on in your head as you typed out your thoughts into code.

Conclusion

PR overload is the boring crisis at the heart of every successful open source project. Godot is hitting it hard right now and there is no single fix.

What I'm advocating for is a triple attack:

- Social fix adopt Juan's voting panel idea but build it grant-committee style with audit trails, consensus discussion, and an appeals process. Anon votes on humans are how good intentions become discrimination.

- Tooling fix let AI do the boring review work (typos, style, taste enforcement) after CI but before human eyes. Catches the small stuff so reviewers can spend their finite attention on things that need a human brain.

- Contributor fix stack your commits. Make your PR reviewable. Use

git commit --fixupandgit rebase --autosquashso addressing feedback doesn't blow up the parts that already passed.

The contributor fix is the one you can do today, alone, without waiting on anyone. So please, stack your commits. Future you, and every reviewer who has to read your work, will thank you.

One more thing for the submitter side. Bastiaan put it well in a chat after I'd shared a draft of this: a PR is a gift. If they take it great, if not you still got the work done for yourself. He's had PRs sit for a year or two and then suddenly become the springboard another contributor needed (his VRS work that Dario eventually carried over the line is the example). It's a healthier mode to operate in than expecting every PR to be reviewed and merged on your timeline, and it makes the inevitable ghosting much easier to live with. Push your work upstream, then move on with your life.

In my recent GodotCon talk I highlighted that we would likely be doing fewer PRs as they took 3-5x the effort of just internally delivering the feature and sharing it unpolished. That extra effort is the gift wrapping. It takes care and time. It is understandable if you don't have that time. The act of wrapping your gift, putting a card on it and presenting it with care is often appreciated more than the contents of that gift.

I love this engine and this community. I want to keep giving back. The reason I'm writing all this is because I want the giving back to be sustainable on both sides.

Links

Related posts

- Lazy Sunday: Feedback Screens, Viewport Bugs, and Giving Back to Godot the XR capture PR used as the worked example

- Shipping VR in Godot: Troubles, Victories and Reality the GodotCon talk this post is a follow up to

- Godot's Rough Patch API churn and why cherry-picks matter

PRs and commits referenced

- Godot PR #116314: Capture XR to Texture the PR being worked on in the example

- Godot PR #116454: Decouple RenderingServer the big rendering refactor that landed mid-flight

Voting panel proposal

- Juan's GodotCon talk

- NIH on dual anonymous proposals being harder than expected (Science)

- Pivotal voting and group decisions (PNAS 2022)

- Asenbaum (2018) anonymity is frequently leveraged by groups to exclude, intimidate, and uphold systemic discrimination against marginalized voices in the public sphere.

- Scott & Barlett (2023) the belief in untraceable anonymity emboldens individuals to bypass normal social filters and engage in cyber-racism and toxic online harassment.

- Wu, Lin, & Shih (2017) "dissociative anonymity", the feeling of complete detachment from one's real-world identity, is a primary trigger for aggressive and highly biased online behaviour.

- Zitzewitz (2012) removing transparency in evaluation systems can backfire, as demonstrated in figure skating where anonymous judging led to an increase in nationalistic favoritism.

References

- Asenbaum, H. (2018). Anonymity and democracy: Absence as presence in the public sphere. American Political Science Review, 112, 459-472. https://doi.org/10.1017/s0003055418000163

- Scott, J., & Barlett, C. (2023). Understanding cyber-racism perpetration within the broader context of cyberbullying theory: A theoretical integration. Children, 10, 1156. https://doi.org/10.3390/children10071156

- Wu, S., Lin, T.-C., & Shih, J.-F. (2017). Examining the antecedents of online disinhibition. Information Technology & People, 30, 189-209. https://doi.org/10.1108/itp-07-2015-0167

- Zitzewitz, E. (2012). Does transparency reduce favoritism and corruption? Evidence from the reform of figure skating judging. Journal of Sports Economics, 15, 3-30. https://doi.org/10.1177/1527002512441479

AI review

- Linus Torvalds on AI: "90% marketing and 10% reality" (TFiR interview) and the video

- Visual Assist by Whole Tomato the long-standing C++ gamedev helper plugin

Git tooling

git rebasedocumentation the official reference for--autosquash- Auto-squashing Git Commits (thoughtbot) a friendly walkthrough of

--fixupand--autosquash - Stacked Diffs (Pragmatic Engineer) the broader workflow context

Find me

Current Hobby Projects

Recent Hobby Projects

-

VR Physics Game

Networked Physics VR game -

TextDB

Text log file based Key Value Database -

LLM ADHD Friend

My experiments in LLM Assistant -

Website Update

Doing a big refresh on the website -

Skate All of August - 2020

Skate & Film Challenge -

Dreams Tutorials and Shorts

Education Series -

Game Dev | Null

True Stories from Making Games -

Raycaster

Simple playground to try some Raycaster coding -

InkPub

Web Writing and Publishing Software -

UXPlayground

Playground to try some UX ideas out on

Social Bits and Bobs

Website: Claire Blackshaw

Flammable Penguins: Small Press Publishing

Mastodon: @kimau@mastodon.gamedev.place

BSky: @EvilKimau

Twitter: @EvilKimau

YouTube: YouTube

LinkedIn: Claire Blackshaw

Twitch: Kimau

Github: Kimau

TikTok: @EvilKimau

Tumblr: Forest of Fun

Book list: Good Reads